Anxiety rarely arrives on schedule. It tends to show up late in the evening, in the pause between tasks, right before sleep, or at a moment when there's no one to text because everyone is busy.

The next open appointment with a psychologist might be weeks away, and on top of that, finding "your" specialist takes energy, courage, and quite a bit of money. Against this backdrop, a smartphone looks like the most accessible option: open an app, start chatting, and get comfort right now.

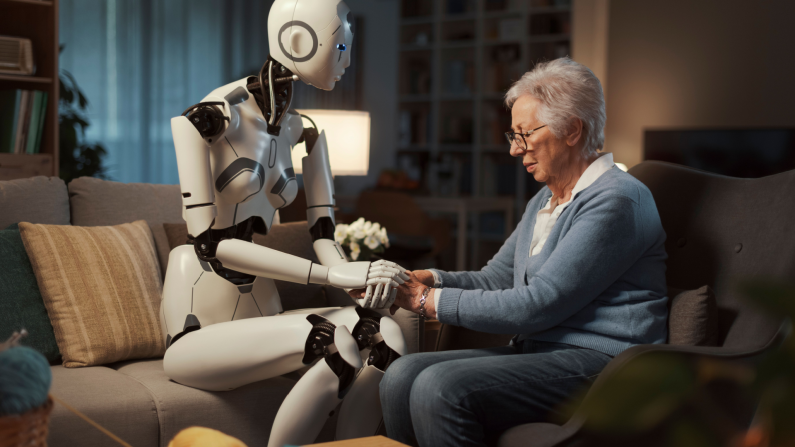

That's how a new neural-network trend was born: the AI therapist, offering 24/7 psychological support with instant access. It really is convenient, and it's often free, too. No wonder more and more people are turning to chatbots and general-purpose language models for this kind of help. Bots respond quickly and warmly and almost always say what you need to hear, so many people start to feel that a live therapist is no longer necessary. But is that really so?

AI Psychotherapy: What’s on the Market Right Now

Let's break down why many people choose an AI therapist over a human one:

- Speed and availability. AI responds instantly, with no waiting lists, no scheduling, and no "I just have to hold out until the session."
- Low barrier to entry. There's no need to show your vulnerable side to a stranger and worry about how you come across. Many people start with a chat simply because it's emotionally easier to take that first step.
- Price. A subscription or a free tier often looks more attractive than regular sessions, which (for professional services) usually start at around $100 per hour.
- A sense of control. You can stop at any moment, choose the tone of the conversation, switch to exercises, or ask the bot to help structure your thoughts.
- Shame and privacy. Paradoxically, some people find it easier to talk "into the void of a screen" than to a live person. It's in the same vein as pouring your heart out to a stranger in a bar or telling a taxi driver your whole life story.

And there would be no problem with any of this if not for one BUT: real, live psychotherapy is not built on empathy and smart questions alone. It is always mutual contact, shared responsibility, boundaries, and a search for common ground between therapist and client, which in turn often helps uncover the deeper problem behind the initial request. Sometimes medication is also unavoidable, for example with chronic anxiety disorders or depression. Even so, the market today is full of AI tools that can (or at least try to) stand in for a visit to a specialist. They fall into several categories.

Apps That Imitate Structured Psychological Support

Most often they rely on cognitive behavioral therapy (CBT) methods and various self-assessments: they help you notice automatic thoughts, track cognitive distortions, reduce anxiety through exercises, plan next steps, and reinforce new skills. These services usually don't just "comfort" you; they guide you through a specific psychotherapy framework. Popular apps in this category include:

Wysa. One of the best-known chat assistants, with a set of CBT/DBT tools built into the dialogue. Basic chat in Wysa is advertised as free, but advanced features and the full tool library require a premium subscription. In practice it looks like this: you write "I'm anxious," and in response you get not only empathy but also a concrete sequence of actions (grounding, breathing, reframing the thought, a plan for the next hour).

Youper. An "emotional assistant" that builds the conversation around exercises and short interventions. Its product description mentions stress, anxiety, and mood, along with "science-backed conversations and exercises." It feels closer to a structured coach than to a friend. The service is paid by subscription, which means it's designed for regular rather than one-off use.

AI Companions

These products also provide support, but they aim to imitate live communication without a rigid psychotherapy agenda. For some users this is enough to cope with loneliness and reduce anxiety, but vulnerable people can quickly get "hooked" on this kind of companion, which creates risks of its own (more on that later). Examples include:

Replika. One of the best-known companions, positioned as an "empathetic friend." Replika states that the basic chat remains free, while a subscription unlocks additional features, including voice calls and expanded conversation modes.

Character.AI and similar roleplay platforms. These are libraries of a huge number of fictional characters, including fictional representatives of various professions. What sets them apart is that any user can create a new character for their own needs, and that includes you. So alongside dedicated therapist bots, you can pour out your soul and work through problems with a hero from your favorite book, series, or historical era, from Harry Potter to Napoleon.

General-Purpose LLMs (Including ChatGPT)

These are not specialized therapeutic products, but people widely use them as therapists because the models can hold a given tone, remember what's been said earlier in the conversation, and keep the dialogue structured. They ask clarifying questions, reflect emotions, help break a situation down into parts, and will take on any role you assign them.

The most common ways people use ChatGPT-like models "instead of therapy" include:

- venting, especially at night or when loved ones are far away;
- breaking down anxiety and tangled thoughts point by point;
- filling out a CBT template such as a thought diary;
- rehearsing a difficult conversation with a partner, parents, or a boss;
- making a plan for the day or week, or for solving a specific task.

How to Correctly Use AI for Psychological Help

If you remember that AI is only a temporary, narrowly targeted tool and not a panacea, it can be useful even when you need psychotherapeutic help. The key is to phrase requests so that the model does what it's actually good at (structuring, offering options, asking questions, helping with plans and exercises) rather than "diagnosing" you or playing the role of a medical professional. AI has proven especially useful for CBT-style work. It also works well for unloading emotions, venting, and structuring thoughts, as well as for preparing for real-world actions and easing the fears connected with them.

Here's what to agree on with the bot up front, and how to set the boundaries of the interaction depending on your situation, so you can use it as effectively as possible without harming your mental health:

- Define the role and approach at the start: "Please respond like a psychologist who works in the CBT approach. Do not make diagnoses and do not give medical recommendations. Ask clarifying questions and help me structure my thoughts."
- If you use it as a thought diary: "Help me fill out a thought diary: 1) situation, 2) automatic thought, 3) emotions (0–100), 4) evidence ‘for’ and ‘against,’ 5) alternative thought, 6) action for the next hour."
- For working with anxiety: "I will describe what happened. Your task: separate facts from interpretations, note possible cognitive distortions, and suggest 2–3 alternative explanations without invalidating my feelings."
- For self-support: "Make a plan for the next 2 hours to reduce my anxiety. The plan should be realistic, with steps of 5–10 minutes each."
- To rehearse an important conversation you're nervous about: "Play the role of my boss/partner. They have [describe their personality]. I want to role-play a conversation on [topic]. After each of my messages, respond briefly and plausibly in character, and then suggest how I could phrase my reply more clearly and effectively."

It's also worth telling the bot up front not to give you any medical recommendations, and stressing that it should offer options (possible interpretations) rather than make a diagnosis or assert anything with 100% certainty. As a bonus, you can ask it to watch for "red flags" that signal it's time to see a live specialist, so you don't miss that moment.

And When Do You Still Need a Live Specialist?

Talking to a bot alone is not enough, or is outright risky, when:

- your condition lasts longer than a month and keeps getting worse, you have severe sleep or appetite disturbances, panic attacks become frequent, and your quality of life steadily declines;
- you have thoughts of self-harm or suicide;
- symptoms appear that resemble psychosis (delusional beliefs, auditory or visual hallucinations);
- you notice you're dependent on the chat and realize you're avoiding real-life contact.

Where Support Ends and Risk Begins: 4 Red Zones

An AI chat can help you get through a difficult moment quickly: pour out your thoughts, calm down a bit, and put together a coherent plan for the next few hours. But this kind of support has its limits. Here are the risks worth keeping in mind:

- Higher risks for teenagers. The teenage psyche combines sensitivity, impulsivity, and a need for acceptance, so an "ideal interlocutor" quickly becomes very important. Some studies already report very widespread use of AI companions among teenagers, along with related safety problems, which is why some platforms have introduced restrictions for minors. There have also been tragic cases where a teenager became so susceptible to an AI's influence that he began acting on what it said (for example, a teenage Character.AI user took his own life after a chatbot styled as Daenerys Targaryen appeared to encourage him).
- AI's tendency toward hallucinations and false confidence. Bots are not perfect. A model can sound convincing even when it's wrong, and throw out "psychological" labels and explanations that don't actually exist. In a therapeutic context, this can further distort a person's perception and reinforce thinking errors.
- Therapy substitution: the "I feel better, so everything is OK" effect. A chat really can reduce anxiety here and now, because talking things through helps regulate emotions. But that relief can become a trap: a person keeps putting off seeing a specialist even though the problem requires long-term work or medical treatment.
- Questionable confidentiality and digital traces. A conversation "like with a therapist" almost always contains sensitive data: trauma, sexuality, addictions, conflicts, thoughts of self-harm. A therapist is bound by professional and legal confidentiality obligations, while an app offers only code, which can be hacked or changed.

Nevertheless, AI assistants have already become a normal part of psychological self-care: they make it easier to start talking about yourself, quicker to break anxiety into manageable parts, and less likely that you'll be left alone in a difficult moment. If you use such tools consciously, for support, skill-building, and structuring your thoughts rather than as a substitute for diagnosis and treatment, they really can serve as a convenient "first line." That is perhaps the main advantage of this new era: help is now closer at hand. But where the stakes are high, you should always have a plan B: a live specialist and real human support, which no chat has yet replaced.
