How neurointerfaces allow you to fight disease by the power of thought

The RBC St. Petersburg award ceremony was held on December 5, 2018. The award recognizes achievements in business and aims to promote civilized business practices. Konstantin Sonkin, the founder of iBrain, won the award for «Best Innovator». Under his leadership, a team of scientists, doctors, engineers, and programmers developed and put into practice a technology that helps paralyzed patients recover lost brain function. They did this with an external neural interface: a device that quite literally reads thoughts.

From cyberbulls to cyberlimbs

Terms with the prefix «neuro» appear more and more often in news, blogs, and articles on modern technology. Neural networks, neuro charts, neuro copywriting: the future is already knocking on the door... or, more precisely, right on the brain.

The concept of a «neurointerface» covers a whole family of technologies whose purpose is to facilitate the exchange of information between the brain and an electronic device. The ancestor of modern neurointerfaces, the stimoceiver, was invented back in the 1950s by Yale University professor José Manuel Rodriguez Delgado. In 1963, using a stimoceiver implanted in the brain of a Spanish fighting bull, Delgado forced the charging animal to stop and then run in circles around the arena.

As time passed, neurointerfaces were used more and more in medicine, especially for restoring hearing, vision, and limb mobility. Most of these devices are invasive, that is, they have to be implanted surgically. For example, cochlear implants are placed in the inner ear of deaf people and transmit sound signals to the brain.

A neural interface designed to help paralyzed people was first used in 1998 by Philip Kennedy, a neurologist from the United States. The musician Johnny Ray, who had suffered a stroke, was able to move a cursor on a computer monitor by the power of thought with the help of electrodes implanted in his brain. This experiment earned Kennedy the title of «Father of cyborgs». Later, he developed a system that allowed a completely paralyzed person to transmit sounds and individual words to a computer simply by «saying» them mentally.

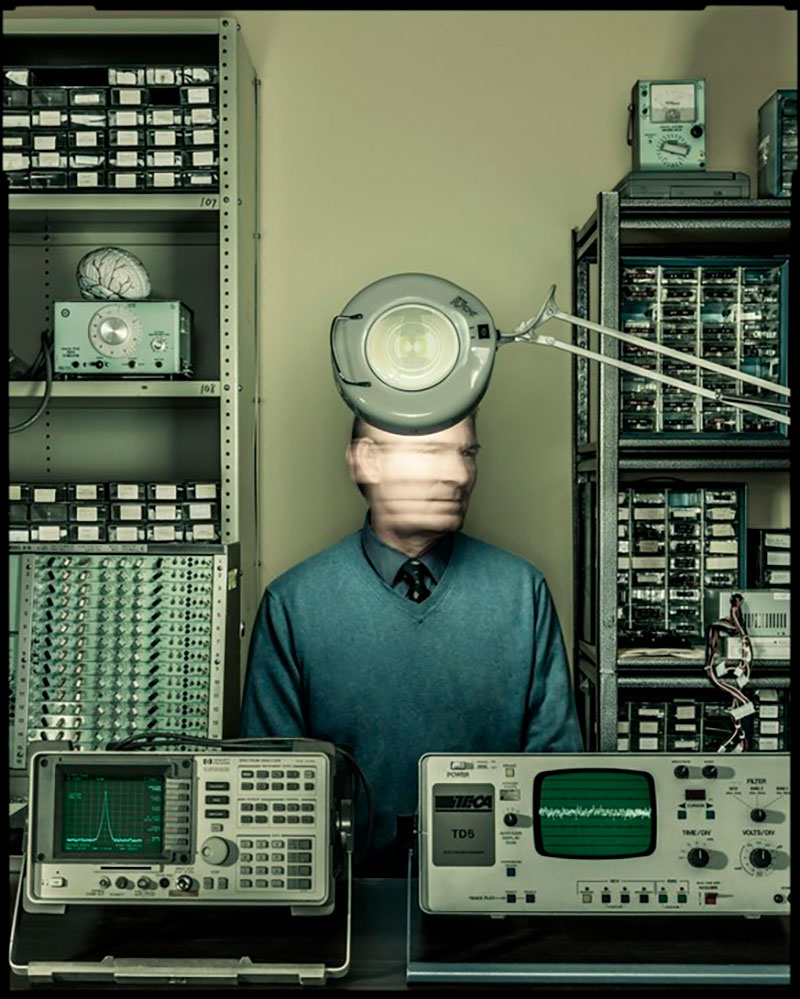

Philip Kennedy — The father of cyborgs. Photo: Dan Winters

A qualitative leap in the development of neurophysiology and neurointerfaces occurred in the 2000s. Brain implants were no longer limited to moving a cursor around a screen: paralyzed patients could now turn household appliances on and off, play computer games, and, most importantly, control artificial limbs. In 2012, a study participant who had lost the ability to move 15 years earlier was able to drink a cup of coffee using a brain-controlled robotic arm.

These developments advance further every year. In 2017, an international group of scientists working at the University of Tübingen tested a system for people who cannot even move their eyes. The equipment allowed them to answer «yes» or «no» to questions. And in 2018, American scientists led by Jamie Henderson created an invasive neurointerface for controlling a tablet. During the experiment, one of the subjects was able to make a purchase in an online store.

But it is especially important to note the emergence of non-invasive (external) devices, which have made it possible to forego dangerous operations on the brain.

Electrical Translator

External neural interfaces currently read several different types of signals. For example, spectroscopy can be used for this purpose: it detects activity in the cortex from the intensity of blood flow in it. Some instruments record and interpret the movements of the patient’s eyes. But the most impressive results have been obtained using electroencephalography, that is, with EEG headsets.

Electroencephalography is a diagnostic method that many of us know from personal experience. EEG registers the bioelectric activity of the brain using electrodes in contact with the scalp. The method is widely used in medicine to detect epileptic activity and other pathologies, to establish the causes of neurological problems, and to evaluate the results of treatment.

The basic principle of using EEG in neural interfaces is the same as in diagnosis. Our brain consists of neurons that transmit electrical impulses to each other. Different stimuli activate different areas of the brain. The task of the researcher is to establish a clear link between the type of stimulus and a specific area of the cerebral cortex.

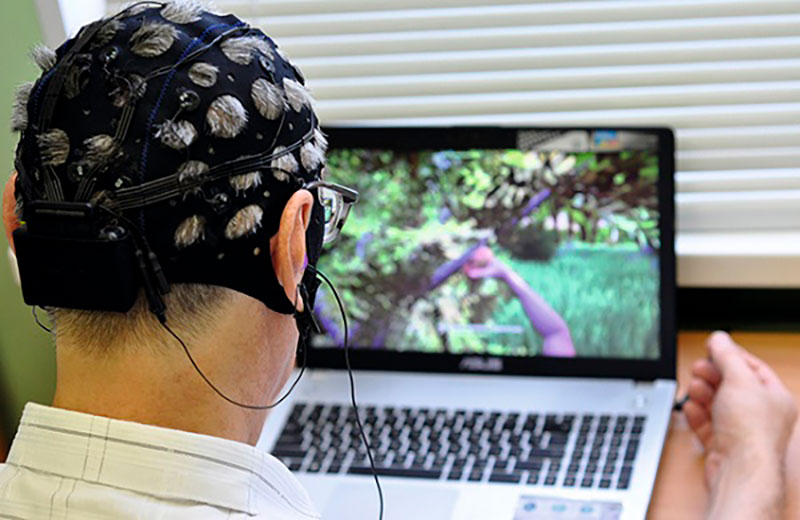

Electroencephalography

Surprisingly, raising a hand and imagining that you are raising a hand are almost identical actions from the brain's point of view. The same group of neurons is activated when you hold a ball in your hand and when you imagine holding it. During the development of the neurointerface, subjects mentally repeated a set of actions many times while the scientists recorded and analyzed the EEG data. A program was then created that could decipher these mental signals and transmit them to a computer. The results were impressive. The computer could recognize a given signal and pass it on to an artificial limb, an exoskeleton, a muscle stimulation device, or virtual reality equipment. Thus, a paralyzed person would be able to control mechanical devices, a wheelchair, his own limbs and fingers, or any software interface for work, communication, and entertainment.
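To make the idea of «deciphering» an EEG signal more concrete, here is a minimal sketch in Python. It is not iBrain's software, and every name in it is hypothetical. It assumes a common approach from the motor imagery literature: imagining a hand movement weakens the 8 to 12 Hz «mu» rhythm over the opposite hemisphere of the brain, so comparing the power of that rhythm on two channels gives a rough guess about which hand was imagined. The signals are synthetic, and the sampling rate and noise level are arbitrary assumptions.

```python
import math
import random

FS = 250           # sampling rate in Hz (assumed for this sketch)
MU_BAND = (8, 12)  # mu rhythm band in Hz (assumed feature, not from the article)

def band_power(samples, fs, band):
    """Average power of `samples` at the integer frequencies inside `band` (direct DFT)."""
    n = len(samples)
    total = 0.0
    count = 0
    for freq in range(band[0], band[1] + 1):
        re = sum(x * math.cos(2 * math.pi * freq * i / fs) for i, x in enumerate(samples))
        im = sum(x * math.sin(2 * math.pi * freq * i / fs) for i, x in enumerate(samples))
        total += (re * re + im * im) / (n * n)
        count += 1
    return total / count

def synthetic_channel(mu_amplitude, rng, seconds=1.0):
    """Fake EEG channel: a 10 Hz rhythm of the given amplitude plus Gaussian noise."""
    n = int(FS * seconds)
    return [mu_amplitude * math.sin(2 * math.pi * 10 * i / FS) + rng.gauss(0, 0.5)
            for i in range(n)]

def decode_imagined_hand(left_hemisphere, right_hemisphere):
    """Guess the imagined hand: the hemisphere opposite to it shows the weaker mu rhythm."""
    left_power = band_power(left_hemisphere, FS, MU_BAND)
    right_power = band_power(right_hemisphere, FS, MU_BAND)
    return "right hand" if left_power < right_power else "left hand"

rng = random.Random(0)
# Simulated trial: imagining the right hand suppresses the rhythm over the left hemisphere.
left = synthetic_channel(mu_amplitude=0.5, rng=rng)
right = synthetic_channel(mu_amplitude=2.0, rng=rng)
command = decode_imagined_hand(left, right)
print(command)
```

A real system would work with many electrodes, filter out artifacts, and train a classifier on each patient's recordings; the sketch only shows the step from raw signal to a command that could be passed on to a device.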

Movement for life

Helping a person to move and communicate is a very important task. But behind the development of these hardware and software tools it is easy to forget that a patient's lost physical capabilities can often be restored, even if not completely. The most striking example is the rehabilitation of patients after a stroke. A stroke is an acute interruption of cerebral circulation that leads to the damage or death of neurons. In Russia, more than 400,000 strokes are recorded annually. About half of stroke survivors are unable to move. As a rule, rehabilitation is limited to physiotherapy, and not all patients return to a normal life. What about those who are not helped by traditional methods?

The start-up iBrain, with which our story began, is addressing this particular problem. The neural interface developed by Konstantin Sonkin and his colleagues is a compact electroencephalograph plus special software that receives and decodes its signals. The rehabilitation process resembles a computer game: a paralyzed person, imagining the movement of a hand, moves the hand of his virtual «double» on the monitor.
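The game-like feedback loop described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not iBrain's implementation: the function name, the 0 to 1 «confidence» value coming from a decoder, the 90 degree range of motion, and the smoothing factor are all hypothetical.

```python
def update_avatar_angle(current_angle, decoder_confidence, max_angle=90.0, smoothing=0.3):
    """Move the avatar's hand part of the way toward a target set by decoder confidence.

    decoder_confidence is a hypothetical value in [0, 1]: how strongly the
    decoded EEG suggests that the patient is imagining the movement.
    """
    confidence = min(1.0, max(0.0, decoder_confidence))  # clamp to [0, 1]
    target = confidence * max_angle
    # Exponential smoothing keeps the on-screen hand from jittering between frames.
    return current_angle + smoothing * (target - current_angle)

angle = 0.0
history = []
# Simulated session: the decoded signal becomes stronger as the patient concentrates.
for confidence in [0.2, 0.6, 0.9, 0.9, 0.9]:
    angle = update_avatar_angle(angle, confidence)
    history.append(angle)
print(history)
```

The design choice worth noting is that the hand moves gradually rather than jumping: the patient sees a steady response to sustained effort, which is the visible result that such training depends on.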

iBrain in action. Photo: https://i-brain.tech

A result that the patient can see is the key. First of all, it gives a powerful incentive not to give up and to keep going. Here the doctor relies on the natural capabilities of the human body. Constant stimulation of the brain areas responsible for controlling movement leads to partial restoration of the connections between neurons. The functions of the dead cells are taken over by neighboring ones.

Similar systems exist abroad. In 2015, University of California specialists combined a neural interface with a neuromuscular stimulation device. As a result, a person paralyzed from the waist down was able to take steps in a special suspension harness, controlling his legs with mental signals. To prepare, the subject first trained by controlling a virtual avatar on a computer monitor.

And in 2017, a method of restoring hand function after a stroke, based on the same principles, was tested in Australia. In that case the feedback was not visual but tactile, delivered by a special moving palm pad. After nine weeks, the subjects' motor function had improved by 36%.

The main differences between iBrain's system and its foreign counterparts are its low cost and the possibility of using it at home. The equipment costs hundreds of thousands of rubles but, according to Konstantin Sonkin, that is still one tenth of the price of such systems abroad. A literally pocket-sized electroencephalograph was developed specially for the project. This means that you can work with it anywhere, including at home, immediately after leaving the hospital. After all, the earlier rehabilitation starts, the greater the chances that mobility will return.

Game interface of iBrain. Photo: https://i-brain.tech

What’s next?

iBrain is continuing its work, and its equipment is now being used to help people who have suffered a stroke. Future plans include a system for direct control of external devices (manipulators and robotic assistants), as well as gaming environments controlled by brain signals. The day may not be far off when neural interfaces move from complex areas of medicine into everyday life. Neuro messengers, neuro smartphones, and neuro consoles: why not?
